MECHE CONNECTS

The Breadth of MechE

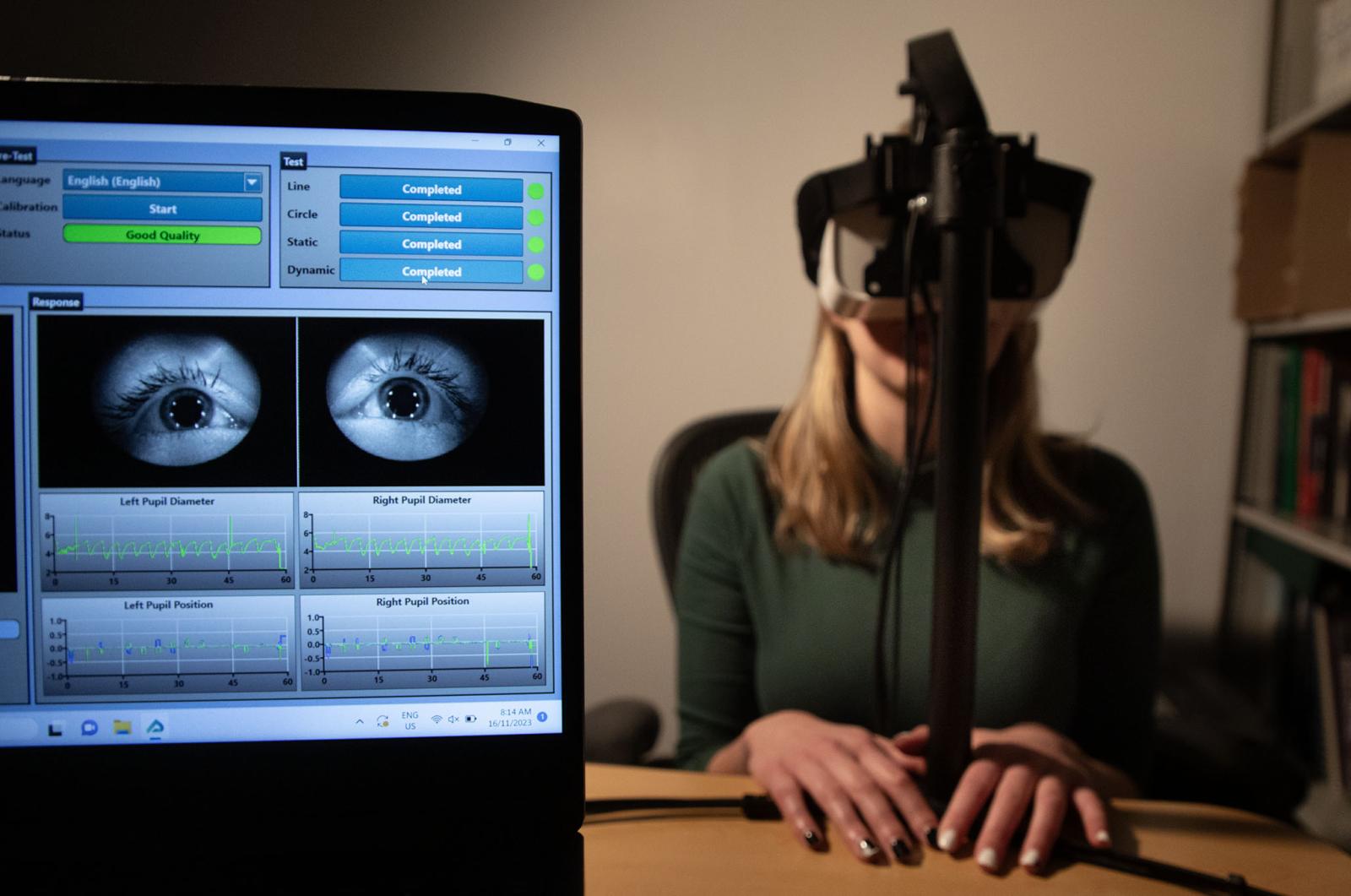

Mechanical Engineering has expanded from its traditional areas to encompass many emerging technologies, ideas, and principles. This breadth allows our department to confront multidisciplinary challenges, while embracing the core principles that have always defined MechE... It would be impossible to showcase every facet of our work, or every contributor, but through the stories and highlights in this issue, we invite you to celebrate the breadth of MechE at MIT.

- Department Head A. John Hart

MechE Connects Video

Across MechE, faculty and staff pursue cutting-edge research at the frontiers of mechanical engineering, teaching and exploring at the intersection of engineering with physics, math, electronics, biology, computer science, and many other fields of study. From the nanoscale to the macroscale, across communities, climates, and regions, and from the ocean floor to the far reaches of space, there’s an incredible breadth of work underway in MechE at MIT.